Case Study

Exo Handling: an AI workflow for complex business aviation ground-handling operations

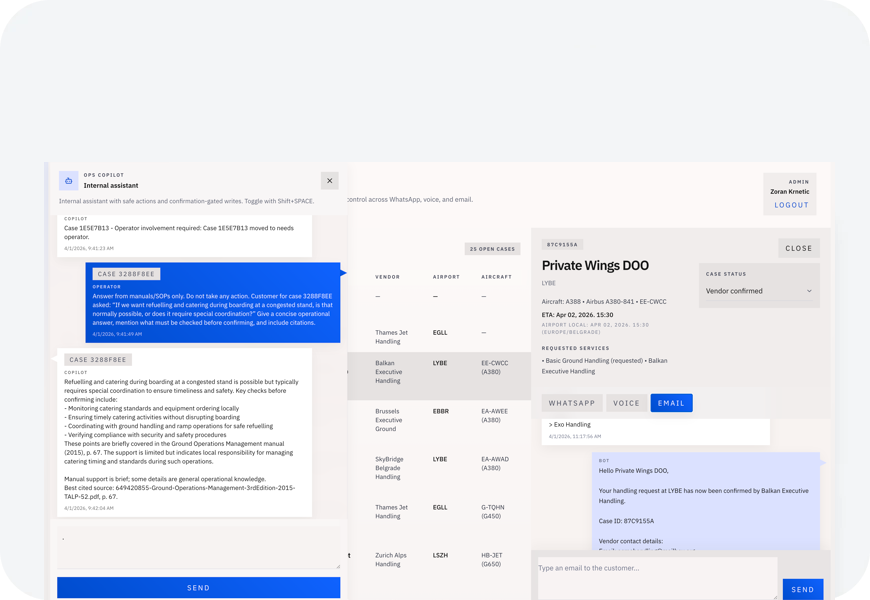

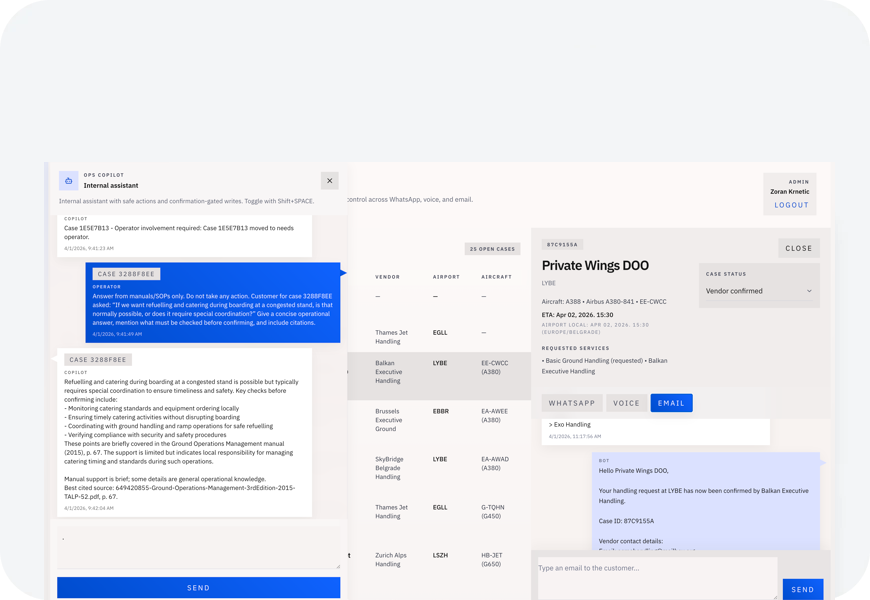

A working AI-native operations workflow for business aviation: multi-channel customer intake, shared case orchestration, vendor coordination, a RAG-backed Ops Copilot, and a supervising-agent pattern designed for human-controlled automation in complex, real-world operations.

- Working system: built as a real operations workflow, not a chatbot demo

- 3 customer channels: WhatsApp, voice, and email

- Human-controlled: automation bounded by approvals, supervision, and clear escalation

The Problem

Real operations do not happen in one inbox, one channel, or one clean workflow

Business aviation ground handling and flight support involve time-sensitive coordination across customers, operators, vendors, and multiple communication channels. In practice, that often means fragmented requests, manual follow-up, scattered context, and too much operational knowledge living in people's heads instead of in the system.

The challenge was not to create another AI demo. The challenge was to shape a workflow that could take in requests, centralize case context, support vendor coordination, and use AI in a way that stays grounded, auditable, and useful to a human operator.

Overview

A working AI workflow designed for complex operations

Exo Handling is an AI-powered operations workflow for non-scheduled aviation ground handling and flight-management teams. It shows how OpenAI GPT-5.4 can be used as a primary build partner to frame, design, implement, and iterate on a credible operational product shaped around real workflow complexity.

The result was not a mockup, deck, or isolated AI assistant. It was a working system with customer-facing intake over WhatsApp, voice, and email, a shared case-management workspace, vendor confirmation workflows, and an internal Ops Copilot that can read case state, answer grounded operational questions, follow up with customers, and propose improvements to customer agents.

Build Approach

The goal was not to prove that AI can help. The goal was to prove it can accelerate serious product delivery.

Exo Handling was scoped as an AI-native product build: not a pitch deck, not a concept mockup, and not a single chat interface, but a connected operational workflow with clear system boundaries, supervision, and real-world credibility.

- multi-channel customer intake instead of a single demo chat

- database-backed case records instead of loose conversation state

- vendor coordination instead of only customer messaging

- retrieval-backed ops support instead of fluent but unsupported answers

- human approval and supervision instead of unconstrained automation
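The move from loose conversation state to database-backed case records can be sketched with Python's built-in `sqlite3`. This is a minimal illustration, not the project's schema: `InboundMessage`, `ingest`, and the choice of tail number plus arrival date as the case key are all hypothetical. What it shows is that WhatsApp, voice, and email messages about the same movement land on one shared case instead of three separate threads.

```python
import sqlite3
from dataclasses import dataclass


@dataclass
class InboundMessage:
    """Hypothetical normalized shape that each channel adapter would emit."""
    channel: str       # "whatsapp", "voice" (transcript), or "email"
    sender: str
    tail_number: str   # assumed case key: aircraft registration
    arrival_date: str  # assumed case key: ISO date of the movement
    text: str


SCHEMA = """
CREATE TABLE IF NOT EXISTS cases (
    id INTEGER PRIMARY KEY,
    tail_number TEXT NOT NULL,
    arrival_date TEXT NOT NULL,
    status TEXT NOT NULL DEFAULT 'open',
    UNIQUE (tail_number, arrival_date)
);
CREATE TABLE IF NOT EXISTS messages (
    id INTEGER PRIMARY KEY,
    case_id INTEGER NOT NULL REFERENCES cases(id),
    channel TEXT NOT NULL,
    sender TEXT NOT NULL,
    body TEXT NOT NULL
);
"""


def ingest(conn: sqlite3.Connection, msg: InboundMessage) -> int:
    """Attach a message to its case, creating the case on first contact."""
    # The UNIQUE constraint makes this a no-op when the case already exists
    conn.execute(
        "INSERT OR IGNORE INTO cases (tail_number, arrival_date) VALUES (?, ?)",
        (msg.tail_number, msg.arrival_date),
    )
    (case_id,) = conn.execute(
        "SELECT id FROM cases WHERE tail_number = ? AND arrival_date = ?",
        (msg.tail_number, msg.arrival_date),
    ).fetchone()
    conn.execute(
        "INSERT INTO messages (case_id, channel, sender, body) VALUES (?, ?, ?, ?)",
        (case_id, msg.channel, msg.sender, msg.text),
    )
    conn.commit()
    return case_id


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    first = ingest(conn, InboundMessage("whatsapp", "crew@example.com", "G-ABCD", "2025-06-01", "Need fuel and a GPU"))
    second = ingest(conn, InboundMessage("email", "ops@example.com", "G-ABCD", "2025-06-01", "Add catering for 6 pax"))
    print(first == second)  # True: both channels share one case
```

A real intake layer would need fuzzier matching (a caller may not state the registration), but keeping the case as the unit of state is what lets vendor workflows and the copilot read the same context.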

What matters here is not raw speed for its own sake, but what AI changes in the delivery equation: implementation becomes dramatically faster, so architecture, workflow boundaries, and supervision patterns matter even more.

What Was Built

Four layers working together as one operations workflow

The customer-facing layer handles routine intake and status flow. The case-management layer centralizes context. Vendor workflows manage confirmations and fall back to alternative handlers when needed. The internal copilot acts as a second operational layer for questions, follow-up, monitoring, and supervision.

Together, these layers create a more usable operational system: faster intake, clearer case visibility, better support for human decision-making, and controlled automation where actions still need approval.
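Vendor confirmation with fallback to alternative handlers might look something like the sketch below. It assumes a hypothetical `request` callback that contacts a handler and returns `"confirmed"`, `"declined"`, or `"timeout"`; `Handler`, `VendorOutcome`, `confirm_with_fallback`, and the handler names are illustrative, not the project's code.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Handler:
    name: str
    priority: int  # lower number = preferred handler


@dataclass
class VendorOutcome:
    confirmed_by: Optional[Handler] = None
    attempts: list[tuple[str, str]] = field(default_factory=list)


# Hypothetical transport: sends the request and waits (with a deadline) for a reply.
RequestFn = Callable[[Handler, str], str]


def confirm_with_fallback(service: str, handlers: list[Handler], request: RequestFn) -> VendorOutcome:
    """Try handlers in priority order until one confirms the service."""
    outcome = VendorOutcome()
    for handler in sorted(handlers, key=lambda h: h.priority):
        status = request(handler, service)
        outcome.attempts.append((handler.name, status))
        if status == "confirmed":
            outcome.confirmed_by = handler
            return outcome
    # Nobody confirmed: confirmed_by stays None, which the caller treats as an escalation.
    return outcome


if __name__ == "__main__":
    replies = {"Primary FBO": "declined", "Backup Handler": "confirmed"}
    result = confirm_with_fallback(
        "fuel uplift",
        [Handler("Backup Handler", 2), Handler("Primary FBO", 1)],
        lambda handler, service: replies[handler.name],
    )
    print(result.confirmed_by.name)  # Backup Handler
    print(result.attempts)  # [('Primary FBO', 'declined'), ('Backup Handler', 'confirmed')]
```

Recording every attempt, not just the winner, keeps the fallback path visible on the case so an operator can see why a secondary handler was used.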

RAG and Grounded Ops Support

The internal copilot was designed to answer from retrieved knowledge, not guess

Retrieval First

Manuals and operational documents are chunked and indexed so the system can pull relevant material before answering.
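Chunking and retrieval can be illustrated in a few lines. This is a simplified sketch under stated assumptions: it splits documents into overlapping word windows tagged with a hypothetical `doc_id#chunkN` source reference, and it swaps in bag-of-words cosine similarity for the embedding model a production system would more likely use. `chunk` and `retrieve` are illustrative names.

```python
import math
import re
from collections import Counter


def chunk(doc_id: str, text: str, size: int = 200, overlap: int = 40) -> list[dict]:
    """Split a document into overlapping word windows tagged with a source reference."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    words = text.split()
    if not words:
        return []
    step = size - overlap
    chunks = []
    for i, start in enumerate(range(0, max(len(words) - overlap, 1), step)):
        chunks.append({"source": f"{doc_id}#chunk{i}", "text": " ".join(words[start:start + size])})
    return chunks


def _vector(text: str) -> Counter:
    # Term-frequency vector over lowercase alphanumeric tokens
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, index: list[dict], k: int = 3) -> list[dict]:
    """Return the k chunks most similar to the query."""
    q = _vector(query)
    return sorted(index, key=lambda c: _cosine(q, _vector(c["text"])), reverse=True)[:k]
```

The overlap keeps a procedure step that straddles a window boundary retrievable from at least one chunk, and the source tag travels with each chunk so answers can point back to it.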

Citations

Ops Copilot can return answers with source references, which makes the output more defensible and auditable in an aviation-adjacent workflow.
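One way to make citations auditable is to check them mechanically before an answer is shown. The sketch below assumes the model is instructed to cite sources as bracketed tags such as `[deicing-manual#chunk0]`; that tag format, `CitationCheck`, and `check_citations` are hypothetical. An answer passes only if it cites at least one source and every cited source was actually retrieved.

```python
import re
from dataclasses import dataclass

# Assumed citation format: [doc-id#chunkN]
CITATION = re.compile(r"\[([A-Za-z0-9_.-]+#chunk\d+)\]")


@dataclass
class CitationCheck:
    cited: set[str]
    unknown: set[str]  # cited sources that were never retrieved

    @property
    def ok(self) -> bool:
        return bool(self.cited) and not self.unknown


def check_citations(answer: str, retrieved_sources: set[str]) -> CitationCheck:
    """Compare the sources an answer cites against the sources actually retrieved."""
    cited = set(CITATION.findall(answer))
    return CitationCheck(cited=cited, unknown=cited - retrieved_sources)


if __name__ == "__main__":
    sources = {"deicing-manual#chunk0", "deicing-manual#chunk1"}
    print(check_citations("Apply fluid within the holdover time [deicing-manual#chunk0].", sources).ok)  # True
    print(check_citations("Uplift needs a signed release [fuel-sop#chunk9].", sources).ok)  # False
```

An answer that fails the check could be flagged for human review rather than presented as grounded.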

Case-Aware

The copilot can combine retrieved knowledge with live case and workflow data to answer practical operational questions.
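Combining retrieved knowledge with live case data is largely a prompt-assembly step. The sketch assumes retrieved chunks arrive as dicts with hypothetical `source` and `text` keys and that the case snapshot is a plain dict; it produces a generic role/content message list, and the exact request format will depend on the model API in use. `build_grounded_prompt` is an illustrative name.

```python
import json


def build_grounded_prompt(question: str, case: dict, chunks: list[dict]) -> list[dict]:
    """Pair a live case snapshot with retrieved passages in a chat-style message list."""
    sources = "\n\n".join(f"[{c['source']}]\n{c['text']}" for c in chunks) or "(no sources retrieved)"
    system = (
        "You are an internal operations assistant. Answer only from the CASE and SOURCES "
        "below. Cite every factual claim with its [source] tag. If the sources do not "
        "cover the question, say so and recommend escalation to a human operator."
    )
    user = (
        f"CASE:\n{json.dumps(case, indent=2, sort_keys=True)}\n\n"
        f"SOURCES:\n{sources}\n\n"
        f"QUESTION: {question}"
    )
    return [{"role": "system", "content": system}, {"role": "user", "content": user}]


if __name__ == "__main__":
    messages = build_grounded_prompt(
        "Is de-icing confirmed for this arrival?",
        {"case_id": 7, "tail_number": "G-ABCD", "status": "open"},
        [{"source": "deicing-manual#chunk0", "text": "Apply Type IV fluid within the holdover time."}],
    )
    print(messages[1]["content"])
```

Keeping case data and manual passages in separately labeled sections makes it easier to tell, when reviewing an answer, whether a claim came from the live workflow or from documentation.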

Human-Usable

The goal is not a clever-sounding agent. The goal is an internal assistant that helps a human operator make better, faster decisions.

The Strongest Pattern

Ops Copilot was not just a chat assistant. It was a supervising agent.

The most interesting architectural move in Exo Handling was the supervising-agent pattern. Customer agents handle front-line intake and constrained tasks, but the internal Ops Copilot does more than wait for prompts. It is designed to:

- monitor workflow events, watches, and notification signals

- step in where customer automation reaches a safe boundary

- propose improvements to customer agents for human approval

- keep important writes confirmation-gated
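The supervising-agent responsibilities listed above can be sketched as a small event handler. Everything here is hypothetical (`Event`, `Supervisor`, the event kinds, and the staging API); it shows watches firing on workflow events, escalation when a customer agent hits a boundary, improvement proposals held for approval, and writes that are staged but not executed until a human confirms them.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Event:
    kind: str  # hypothetical kinds: "message", "vendor_timeout", "agent_boundary"
    case_id: int
    detail: str = ""


@dataclass
class PendingWrite:
    description: str
    action: Callable[[], None]


@dataclass
class Supervisor:
    # Named watch predicates registered on the workflow event stream
    watches: dict[str, Callable[[Event], bool]] = field(default_factory=dict)
    notifications: list[str] = field(default_factory=list)
    escalations: list[int] = field(default_factory=list)
    # Improvements to customer agents, held until a human approves them
    proposals: list[str] = field(default_factory=list)
    pending_writes: dict[int, PendingWrite] = field(default_factory=dict)
    _next_id: int = 0

    def observe(self, event: Event) -> None:
        """Monitor one workflow event: fire matching watches, escalate at boundaries."""
        for name, predicate in self.watches.items():
            if predicate(event):
                self.notifications.append(f"{name}: case {event.case_id}")
        if event.kind == "agent_boundary":
            # Customer automation stopped at a safe boundary; a human takes the case
            self.escalations.append(event.case_id)
            self.proposals.append(f"Review customer-agent handling of: {event.detail}")

    def stage_write(self, description: str, action: Callable[[], None]) -> int:
        """Record an intended write without executing it."""
        self._next_id += 1
        self.pending_writes[self._next_id] = PendingWrite(description, action)
        return self._next_id

    def confirm_write(self, write_id: int) -> None:
        """Run a staged write; intended to be called only from a human approval step."""
        self.pending_writes.pop(write_id).action()


if __name__ == "__main__":
    case_status: dict[int, str] = {}
    sup = Supervisor(watches={"vendor silent": lambda e: e.kind == "vendor_timeout"})
    sup.observe(Event("vendor_timeout", case_id=7))
    sup.observe(Event("agent_boundary", case_id=7, detail="request to move slot time"))
    write_id = sup.stage_write("Mark case 7 escalated", lambda: case_status.update({7: "escalated"}))
    print(case_status)  # {} (nothing is written until a human confirms)
    sup.confirm_write(write_id)
    print(case_status)  # {7: 'escalated'}
```

Separating staging from confirmation means the copilot can prepare an action in full while the decision to commit it stays with the operator.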

That layered control model reflects how AI is more likely to be useful in real operational businesses: not one super-agent doing everything, but bounded agents with supervision, retrieval, tooling, and a human still in control.

Why this matters

Many AI demos stop at a customer bot. Exo Handling goes a step further, adding an internal copilot that can observe, guide, and improve customer agents over time. AI then works as a supervised layer inside the workflow, not just a conversational surface.

Demo

Watch the workflow in action

The demo shows customer requests arriving across channels, cases being created and updated, vendor confirmations and fallback logic, live voice updates, and the internal copilot handling case questions, watches, and improvement proposals.

Why It Matters

The takeaway is not speed alone. It is how AI changes the shape of serious operational software.

Complete operational workflows can now be delivered far faster than before.

GPT-5.4 was not only useful for snippets. It helped shape the backend, frontend, prompts, workflows, and iteration loops across the full product. That shortens the path from idea to working system, especially when product, UX, and implementation are tightly integrated.

RAG matters more when the workflow is operational.

In domains where procedures and manuals matter, fluent answers are not enough. Retrieval and citations make the internal copilot more trustworthy.

Supervising agents are more interesting than single-agent demos.

The strongest pattern here is a supervisory layer that can monitor and improve customer automation instead of only generating answers on request.

Human approval remains essential for consequential work.

The system becomes more useful when it is allowed to help take action, as long as that action stays bounded by confirmation-gated writes and clear escalation points.

Credits

A case study in designing and building AI workflows for complex operations

Project

Exo Handling

Product, Design, Build

Happy Path Solutions

Primary AI Build Partner

OpenAI GPT-5.4

Key Architectural Themes

RAG, supervising agents, confirmation-gated writes